Captivate SCORM Problems

We have been digging into some of the Captivate SCORM outputs lately in an effort to better understand SCORM compliance and where Captivate falls down in this area. What we have found is very interesting and needs to be explained in some detail.

The Problem

Until recently, anyone who wanted to author SCORM-compliant content had few choices. Not many authoring programs existed and the technical knowledge to create compliant content was and, in fact, still is beyond the reach of most training developers. Now there are many affordable, easy to use content authoring programs to create SCORM-compliant content that can be deployed to learning management systems (LMS). Adobe, a leader in the multimedia authoring and programming industry, has recently thrown their hat into the ring and released Adobe Captivate – a SCORM-compliant authoring tool that includes screen capture, simulation, automated testing and more.

Adobe Captivate and LMS Software Working Correctly

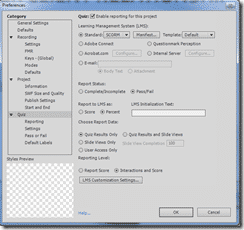

When exporting content from Adobe Captivate, you have the option of making your package SCORM 1.2 Compliant. Specifics of the SCORM specification could fill an entire book (in fact, it does!), so let’s just say that SCORM defines what must be included in a content package (certain files which contain certain information in a certain format) and the methods that the content package must use to communicate information (student name, score, etc.) to and from the LMS. The idea is that content authoring programs and learning management systems would all be programmed to comply with the spec and therefore be compatible with each other. Unfortunately, reality has not lived up to the vision.

The SCORM 1.2 specification is long, open to some interpretation and not always logical. Developers have had to make some assumptions and, at the same time, had to predict and hope that other developers made the same assumptions! Our experience with the workings of Adobe Captivate and the development of our own SCORM-compliant LMS has given us some insight to help you get the most out of Adobe Captivate and your LMS – even if it’s not our LMS! Note that we are only focusing on SCORM 1.2. The SCORM 1.3 specification was recently released; however, most learning management systems and authoring tools, even those recently released, still support SCORM 1.2 and rightfully so.

Problem 1 – Setting Captivate to be SCORM 1.2 Compliant

If you export a Captivate package that does not have any graded questions in it, it will not be SCORM-compliant. I don’t mean that it just won’t track because it has no grade to send; I mean it is not compliant. In tracing method calls from Captivate lessons, we’ve found that a lesson with no questions will not make the required call to the LMS to initialize itself upon start-up. It will make the finalize call upon exit, however any compliant LMS will throw back an error when this happens. The spec dictates that a content package must initialize itself before it can finalize itself. Makes sense, right?
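The ordering rule can be illustrated with a small sketch. The `createMockApi` object below is a hypothetical stand-in for an LMS-side SCORM 1.2 API, not Captivate's or any real LMS's code. It models only session state, and it assumes the SCORM 1.2 conventions that calls return the strings "true" or "false" and that error code 301 means "Not initialized".

```javascript
// Hypothetical, minimal stand-in for an LMS-side SCORM 1.2 API object.
// Only the initialize/finish ordering is modelled here.
function createMockApi() {
  let initialized = false;
  let lastError = "0"; // "0" means "No error"

  return {
    LMSInitialize(arg) {
      if (arg !== "") { lastError = "201"; return "false"; } // invalid argument
      initialized = true;
      lastError = "0";
      return "true";
    },
    LMSFinish(arg) {
      if (arg !== "") { lastError = "201"; return "false"; } // invalid argument
      if (!initialized) { lastError = "301"; return "false"; } // not initialized
      initialized = false;
      lastError = "0";
      return "true";
    },
    LMSGetLastError() { return lastError; },
  };
}

// A lesson that skips LMSInitialize, as described for lessons with no questions.
const broken = createMockApi();
const brokenFinish = broken.LMSFinish("");
const brokenError = broken.LMSGetLastError();

// A lesson that follows the required order.
const ok = createMockApi();
const okInit = ok.LMSInitialize("");
const okFinish = ok.LMSFinish("");

console.log(brokenFinish, brokenError); // false 301
console.log(okInit, okFinish);          // true true
```

Tracing the calls a published lesson makes against a logging stub like this is one way to check whether a given package initializes itself before it finalizes.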

A tangential problem to this is that a lesson with no questions (even if the correct initialize and finalize calls are made) has no way to tell when it’s been completed, so it does not send that information to the LMS either. This secondary problem is not an issue of compliance as the SCORM specification does not require this information to be sent, but more an issue of usability. What’s the point of making a SCORM-compliant lesson and loading it into an LMS if you never find out when your users have completed it?

Solution 1 – Captivate SCORM Solved

The resolution to both these problems is easy – just make sure that you have a graded interaction in your lesson. It can be an interaction that is actually presented as such or even a button or hot spot that you are sure your users will click while viewing the lesson. The possibilities here are endless, so be sure to test your solution, but the bottom line is that there needs to be at least one graded interaction in your lesson.

Problem 2 – Passing the Proper Lesson Status Value

Adobe Captivate lets you choose whether to report ‘pass/fail’ or ‘complete/incomplete’ values for lesson status, but this is not an arbitrary choice. The spec dictates that this shall be determined by the lesson after querying the LMS and deciding based upon the response it receives.

When publishing with Captivate, if you select complete/incomplete, and the user fails or fails to finish the lesson, the value of ‘incomplete’ will be reported to the LMS. In the event that the user completes or passes the lesson, the value of ‘complete’ will be reported to the LMS. Likewise, if you select pass/fail, then the value of ‘pass’ will be used instead of ‘complete’ and the value of ‘fail’ will be used instead of ‘incomplete’.

Additionally, Captivate lessons never query the LMS for the value of ‘credit’, which is the element that the lesson should be using to determine whether to use ‘complete/incomplete’ or ‘pass/fail’.
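As a rough sketch of the behaviour described above, a lesson could read `cmi.core.credit` and pick its status vocabulary from the answer. The `resolveLessonStatus` helper and the `stubApi` factory are hypothetical names used for illustration. The mapping follows this article's reading of the spec, and it uses the data model spellings of the status values (`passed`, `failed`, `completed`, `incomplete`).

```javascript
// Hypothetical helper: choose the lesson status to report based on cmi.core.credit.
// `api` is any object exposing the SCORM 1.2 LMSGetValue call.
function resolveLessonStatus(api, finished, metPassingScore) {
  const credit = api.LMSGetValue("cmi.core.credit"); // "credit" or "no-credit"
  if (credit === "credit") {
    // For-credit lessons report a pass/fail style status.
    return metPassingScore ? "passed" : "failed";
  }
  // Not-for-credit lessons report a complete/incomplete style status.
  return finished ? "completed" : "incomplete";
}

// Stub LMS that answers only the credit query (an assumption for this example).
const stubApi = (credit) => ({
  LMSGetValue: (name) => (name === "cmi.core.credit" ? credit : ""),
});

console.log(resolveLessonStatus(stubApi("credit"), true, true));     // passed
console.log(resolveLessonStatus(stubApi("credit"), true, false));    // failed
console.log(resolveLessonStatus(stubApi("no-credit"), true, false)); // completed
console.log(resolveLessonStatus(stubApi("no-credit"), false, false)); // incomplete
```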

Problem 2 – Solution to Captivate Lesson Status Value

Solving this problem may or may not even be necessary – it’s a rather minor issue. The best thing to do is make sure that you coordinate the credit setting you use in the LMS with the lesson status value you select here. Lessons that are for credit should use ‘pass/fail’ and lessons that are not for credit should use ‘complete/incomplete’. However, one thing to note, and this takes us indirectly to Problem #3 and beyond, is that the spec dictates that the LMS re-evaluate the score and change this value if you have set a mastery score. We’ll come back to this when we get to Problem #4.

Problem 3 – Passing Score in the SCORM Format

The ‘Publish’ interface in Adobe Captivate lets you choose whether to report score as a raw value or as a percentage, while the spec dictates that this value must be ‘normalized between 0 and 100’ (meaning it must be a percentage score). When you choose to report this value as a raw score, your lesson is not compliant.

Adobe tells us that they included this option for a very specific reason. The spec defines 3 values relating to score and all shall be normalized between 0 and 100 – minimum score, maximum score and what they call raw score (oddly enough, the spec calls it ‘raw score’ and at the same time dictates that it be normalized – no wonder everyone is confused!). Logically, since they are required to be normalized between 0 and 100, minimum score would always be 0 and maximum score would always be 100, so why even use them? Because of this confusion, Adobe decided to allow the content author to decide whether to report score as raw or normalized.

The problem occurs when you choose to report score as raw and then load your content into an LMS that has been implemented according to the SCORM spec because it will expect to receive score normalized. Confusion ensues!

You create a Captivate lesson and choose to report score as a raw value. Your lesson has 5 questions and your user gets them all correct. Your lesson is going to report ‘5’ as the score and a compliant LMS is going to interpret this as 5%. Of course, your lesson should also report a lesson status of ‘complete’ or ‘passed’ (see problem #2), which will truly confuse your user when they look at their stats and see that they passed/completed a lesson with a score of only 5%!
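To make the difference concrete, here is a minimal sketch of what percentage reporting amounts to. The `toPercentage` and `reportScore` helpers and the `recordingApi` stub are hypothetical names for illustration; only the `cmi.core.score.*` element names and the `LMSSetValue` call come from SCORM 1.2. This is not how Captivate itself is implemented.

```javascript
// Convert a count of correct answers to a 0-100 percentage.
function toPercentage(correct, total) {
  if (total <= 0) throw new RangeError("total must be positive");
  return Math.round((correct / total) * 100);
}

// Hypothetical helper: report a normalized score through the SCORM 1.2 API.
function reportScore(api, correct, total) {
  const pct = toPercentage(correct, total);
  api.LMSSetValue("cmi.core.score.min", "0");
  api.LMSSetValue("cmi.core.score.max", "100");
  api.LMSSetValue("cmi.core.score.raw", String(pct)); // values are sent as strings
  return pct;
}

// Stub LMS that simply records what it was sent (an assumption for this example).
const sent = {};
const recordingApi = {
  LMSSetValue(name, value) { sent[name] = value; return "true"; },
};

const reported = reportScore(recordingApi, 5, 5);
console.log(reported, sent["cmi.core.score.raw"]); // 100 100
```

With 5 of 5 correct, the LMS receives 100 rather than 5, so the score and the lesson status tell the same story.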

Problem 3 – Solution to Passing SCORM Score Correctly

This is an easy one. Unless you are certain that your LMS implements score as a raw value, always select ‘percentage’ to ensure that your lesson is compliant.

Problem 4 – Making it all Work

Take a deep breath, because problem #4 might get a little confusing. The SCORM specification instructs the LMS to change the lesson status (the same value discussed in problem #2) when certain conditions apply. When this happens, the LMS shall use the score to decide how to change the lesson status value. If you remember though, from problem #3, you may be reporting score as a non-compliant raw value, so the LMS may change the lesson status based on bad information.

To get a better understanding of this, let’s introduce mastery score. You set the mastery score by clicking the ‘Manifest’ button on the Publish interface. Mastery score is a value stored in the manifest file that is included in the content package you load into the LMS. The LMS reads this value and stores it with the lesson. Notice that Captivate instructs that this value should be between 0 and 100, that is, normalized.

Now the SCORM specification instructs the LMS that if mastery score is set, the lesson is being taken for credit and the lesson status is not ‘incomplete’, the LMS shall change the lesson status to the appropriate value (complete, incomplete, pass or fail) by comparing the score reported from the lesson and the mastery score that is defined in the manifest. This occurs even if the lesson has already passed a value for lesson status.

The first thing to notice is that you probably should set the mastery score to the same value that you set passing score. That way, if the LMS re-evaluates the lesson status, it will use the same value as the passing score that the lesson itself does.

Now let’s refer back to Problem #3. You had the option of reporting score as a raw value. If you chose that option, when the LMS performs this re-evaluation of lesson status, it is going to compare a raw score to the normalized mastery score. Since one value is normalized and the other is not, it should be clear that you will have some unexpected results from this.

Example

You create a Captivate lesson with 20 questions. You choose to report score as a raw value (non-compliant per Problem #3, but Captivate lets you do it), choose to use ‘pass/fail’ for lesson status, enter a mastery score of 80% and enter a passing score of 80%. Your user gets 17 questions correct.

When the lesson finalizes, the lesson reports ‘pass’ to the LMS for lesson status and ‘17’ for score. Everything looks good until the LMS sees that there is a mastery score and therefore it must re-evaluate the lesson status. The LMS looks at score (‘17’) and sees that it is less than mastery score (‘80’), so it changes lesson status to ‘fail’. In fact, a lesson created with these settings will always have its lesson status re-evaluated to ‘fail’ by the LMS because even a perfect raw score (‘20’) will always be less than the mastery score (‘80’).
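The re-evaluation in this example can be sketched as a small function. This is an illustration of the rule as this article describes it, not code from any particular LMS; `reevaluateStatus` and its parameter names are hypothetical, and the status values use the data model spellings `passed` and `failed`.

```javascript
// Hypothetical sketch of the LMS-side re-evaluation described in the article:
// it applies only when a mastery score is set, the lesson is taken for credit,
// and the reported status is not "incomplete".
function reevaluateStatus({ reportedStatus, score, masteryScore, credit }) {
  const applies =
    masteryScore !== null &&
    credit === "credit" &&
    reportedStatus !== "incomplete";
  if (!applies) return reportedStatus;
  return score >= masteryScore ? "passed" : "failed";
}

const base = { reportedStatus: "passed", masteryScore: 80, credit: "credit" };

// Raw score of 17 out of 20 compared against a normalized mastery score of 80.
const rawResult = reevaluateStatus({ ...base, score: 17 });
// Even a perfect raw score of 20 is below 80.
const perfectRawResult = reevaluateStatus({ ...base, score: 20 });
// The same 17 out of 20 reported as a percentage (85).
const percentResult = reevaluateStatus({ ...base, score: 85 });
// With no mastery score, the reported status is left alone.
const noMasteryResult = reevaluateStatus({ ...base, masteryScore: null, score: 17 });

console.log(rawResult, perfectRawResult, percentResult, noMasteryResult);
// failed failed passed passed
```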

The root of the problem is that Captivate prompts you to enter mastery score normalized, but gives you the option to report score as a raw value. They need to be on the same scale for the re-evaluation by the LMS to work properly.

Solutions

Solution 1 – Don’t enter a mastery score. By doing this, the LMS will not re-evaluate the lesson status and you avoid the problem altogether. But don’t forget about Problem #3 and its solution.

Solution 2 – Make sure that mastery score and score are both normalized by choosing to report score as a ‘percentage.’ You’ll notice that this is also the solution to Problem #3. If you have confirmed that your LMS expects to receive score as raw, then use Solution #3.

Solution 3 – If you must report score as a raw value, then be sure to enter a raw value for mastery score. In our scenario, instead of entering ‘80’ for mastery score, you would enter ‘16’ (80% of 20 questions). That way, when the LMS re-evaluates lesson status, both score and mastery score are on the same scale and the calculation is done correctly. While technically incorrect since the spec dictates that mastery score be normalized, we won’t worry about it because you’d only use this solution in the case that your LMS is also non-compliant because it’s expecting raw values for score. It’s a workaround.

Conclusion

We’ve seen that Adobe Captivate provides a robust solution for quickly developing online training solutions. But let’s not forget that we need to be mindful of the implementation of the SCORM specification by the LMS and how it’s going to react to our Captivate lessons.

Review the problems and their solutions and you can be sure that your Captivate lessons are going to comply with SCORM 1.2 and function properly when loaded into a SCORM 1.2-compliant learning management system.

Good info. I have a question:

I don’t want to use a quiz slide, but a regular slide and want to perform following tasks. I have set the reporting parameters to SCORM1.2 in preferences.

Capture a free text on 2 different “Text Entry Boxes” , favorite food and favorite city of user (Not using short answer quiz slide)

Submit the answers to LMS on click of a button, that executes javascript.

This is the javascript code that I have written and not sure about it

var v_food = window.cpAPIInterface.getVariableValue("food");

var v_city = window.cpAPIInterface.getVariableValue("city");

SCORM_CallLMSSetValue('cmi.interactions.0.LMS_food', v_food);

SCORM_CallLMSSetValue('cmi.interactions.0.LMS_city', v_city);

The whole idea is to transmit custom generated data to LMS and retrieve the same as needed.

Any help is highly appreciated.

Did you manage to do this?

I am shocked that Captivate doesn’t allow (or makes it very difficult) to record user-entered data. Surely this is the main purpose of such software?

Later versions of the SCORM packages appear to not have this problem. But you are exactly right… this is the entire point of the software.

I’m not sure if this is the best place to ask, but our client has a series of SCORM v1.2 courses. Within these courses, there is a click-box that covers the entire screen at the end of the course that the user can’t help but click. When they do, they are given a score of 1 (the pass mark of the course) which should tell the LMS that they have completed the course.

And it works. 98% of the time. But for a few random users on a few random courses, it simply doesn’t work. The user is stuck at the end of the course and unable to complete it despite repeatedly pressing the button (the button also has a bit of Javascript to refresh the browser – and this still works).

I could understand it if the button never worked, but the fact it nearly always works but occasionally doesn’t means I’m at a loss to understand what’s going on or recreate the problem. Any ideas? 🙂

We have seen this before and there are a few thoughts.

1. What system are you hosting the packages on?

2. Are you sure that the users actually clicked the finish event, rather than closing out the browser?

3. You can sometimes get 100% assured click-through by tempting users: “Click here to see result”, etc.

4. Have you created the packages with Captivate?

5. Does your LMS platform allow you to host SCORM 2004?

Hi guys,

Thanks for getting back to me. I’ve emailed you privately with the answer to your first question (especially since the issue may not be connected to the LMS), but here is my response to your other questions.

2. Definitely sure. The LMS we use lets us “log in” as the user so we can experience what the user is experiencing. However, because this problem only happens occasionally, we can’t recreate it “from scratch”. If we re-enrol the user on the course and they take it again, then it works.

3. As above. When we log in as the user, we can experience the same problem as the user. We just can’t create it “from scratch” as it appears to happen almost randomly (on different courses to multiple users in various global locations).

4. Yes. Captivate 2019. Published using SCORM 1.2. Happy to send you an example if that would help!

5. Yes it does. Obviously if that would solve the problem then that would be great, but there are quite a lot of courses to update / re-upload, so it would be useful to know if this is a definite solution!

Thanks very much!

Thanks for the reply.

The reason we are familiar with this is that we have experienced the exact same thing with multiple LMS systems. And the solution, effectively, is that there is no solution other than to change the combination of the LMS system or SCORM package.

We have been able to replicate it multiple times with multiple systems, but the one thing they all have in common is that they are using Captivate SCORM 1.2. So you are likely correct in your assertion that it is not the LMS system that is at fault. That being said, technically speaking it’s probably not the Captivate SCORM 1.2 output that is at fault either, other than to say that the mechanism SCORM 1.2 uses is kind of dodgy.

There is a great article that explains the 2004 standard vs 1.2 here. https://www.elearningfreak.com/how-to-explain-aicc-scorm-12-and-scorm-2004-to-anyone/

It helps to think of the two standards along the lines of Windows 95 versus Windows XP. In our testing, the SCORM 2004 packages of the same content did not experience the problems you describe. Some LMS systems do not support 2004, which is why I asked. We have had several enquiries around this article and helped many organisations resolve this problem. As a hosting provider for LMS systems, we have even helped some organisations migrate their content from an LMS system that could not support SCORM 2004 into a platform that could, which subsequently resolved the issue.

It’s definitely a strange one, as it is virtually impossible to replicate on demand but happens often enough that with keystroke recording we were able to verify that the correct logout process was followed and the LMS system was never passed the completion parameter to register the success of the course. Super annoying, and we must have wasted weeks of our lives verifying the problem and contacting the various LMS developers and the Adobe Captivate team.

What we were able to work out was that the problem requires a combination of Captivate and some LMS systems. While individually Captivate is not the problem, nor the LMS, a combination of both appears to cause the issue.

If you have to stick with Captivate SCORM 1.2, then Moodle or Canvas appear to be the most robust of the LMS platforms that we host and have tested.

Talking with Adobe some years ago about this issue, it was clear that they have zero intention of debugging and developing for an average standard that was replaced many years ago.

If you do need a hosted LMS system, please contact us and we would be happy to give you a test bed to play with.

Also, if you are updating to SCORM 2004, doing so progressively and testing along the way is advised.

That’s exceedingly useful thank you. I’ll try with other SCORM formats and get back to you.